Let us now run a job using the Python shell provided by Apache Spark.
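As a minimal sketch of such a job, assume the shell was started with `spark-2.1.0-bin-hadoop2.7/bin/pyspark --master` followed by the Spark URL of the master. The shell then predefines a SparkContext named `sc`, which can spread a small computation across the workers. The function name `sum_of_squares` and the partition count are illustrative choices, not part of Spark.

```python
def sum_of_squares(sc, n, partitions=4):
    """Sum the squares 1^2 + ... + n^2 on the cluster.

    `sc` is the SparkContext that the pyspark shell predefines.
    """
    # Distribute the input numbers over the requested number of partitions.
    numbers = sc.parallelize(range(1, n + 1), partitions)
    # The squaring runs on the workers, one partition at a time.
    squares = numbers.map(lambda x: x * x)
    # Partial sums from each partition are combined into one result.
    return squares.reduce(lambda a, b: a + b)
```

In the shell, `sum_of_squares(sc, 100)` should evaluate to 338350, and the job should show up on the status page at port 8080 while it runs.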

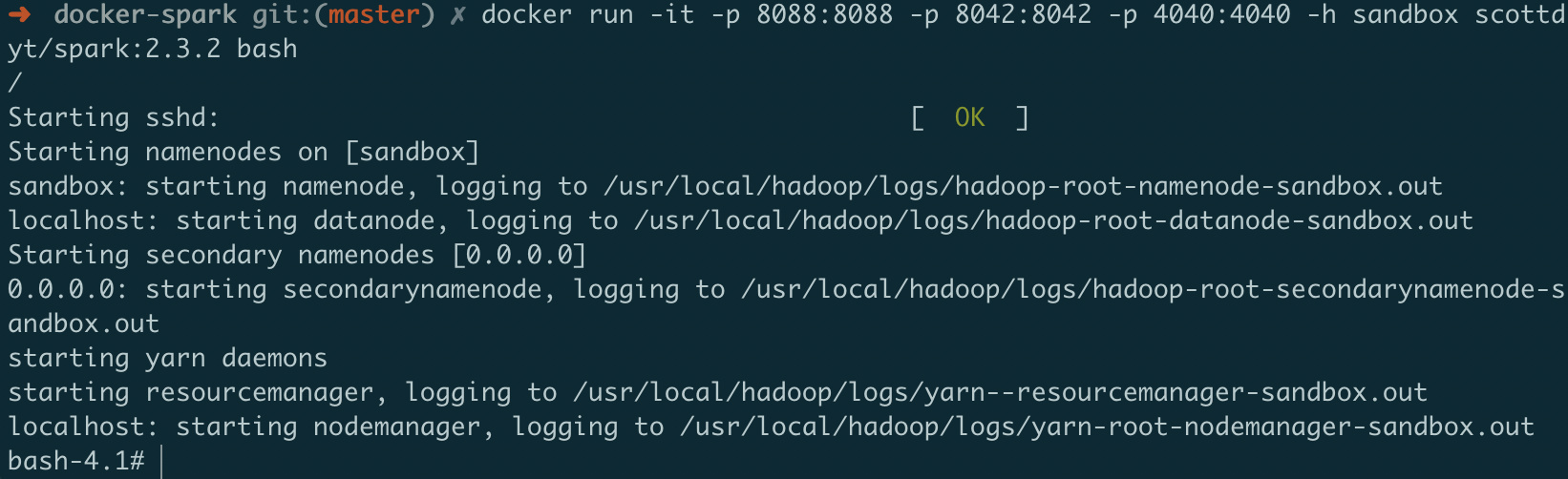

Set JAVA_HOME to the location of the Java installation: `export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64/`

And that's it for installation! The friendly folks at Apache Spark have certainly made our lives easy, haven't they?

Let us now fire up the Apache Spark master. This is where you submit jobs, and this is where you go for the status of the cluster. Once the master is running, navigate to port 8080 on the node's public DNS to get a snapshot of the cluster. The URL highlighted in red is the Spark URL for the cluster. Copy it down, as you will need it to start the slave.

Slave startup: ensure that JAVA_HOME is set properly and run the following command, using the Spark URL you copied from the status page: `spark-2.1.0-bin-hadoop2.7/sbin/start-slave.sh spark://:7077`

And with that, your cluster should be functioning. Hit the status page again at port 8080 to check for it. Observe that you can see the slave under Workers, along with the number of cores available and the memory.
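The startup and status-check steps above can be sketched in Python as follows. This is an illustration under stated assumptions, not an official tool: `start-master.sh` is assumed to sit next to `start-slave.sh` in `sbin`, the master is assumed to expose a JSON view of its status page at `/json`, and the JSON field names (`workers`, `host`, `cores`, `memory`, `state`) are assumptions about that layout. The host name `node1` in the usage note is hypothetical.

```python
import json
from urllib.request import urlopen

SPARK_HOME = "spark-2.1.0-bin-hadoop2.7"  # directory name used in this article


def spark_url(master_host, port=7077):
    """Build the Spark URL that the slave uses to find the master."""
    return "spark://{}:{}".format(master_host, port)


def start_commands(master_host, spark_home=SPARK_HOME):
    """Return the master and slave start commands as argument lists."""
    return [
        [spark_home + "/sbin/start-master.sh"],
        [spark_home + "/sbin/start-slave.sh", spark_url(master_host)],
    ]


def summarize_workers(status):
    """List (host, cores, memory in MB, state) for each registered worker.

    `status` is the parsed JSON from the master; the field names are an
    assumption about its layout.
    """
    return [
        (w["host"], w["cores"], w["memory"], w["state"])
        for w in status.get("workers", [])
    ]


def fetch_status(master_host, port=8080):
    """Fetch the JSON view of the status page (assumed to live at /json)."""
    with urlopen("http://{}:{}/json".format(master_host, port)) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

For example, `start_commands("node1")` yields the two command lines to run in order (for instance with `subprocess.run`), and `summarize_workers(fetch_status("node1"))` should list one worker once the slave has registered.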

`mkdir ~/server`

After unpacking, you have just one step to complete the installation: setting JAVA_HOME.
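Since a missing or wrong JAVA_HOME is the one thing that can trip up this step, a small check can save some head-scratching. The sketch below is a hypothetical helper (the name `check_java_home` is not part of Spark) that assumes a usable Java installation has a `bin/java` binary under JAVA_HOME.

```python
import os


def check_java_home(env=None):
    """Return the path to the java binary under JAVA_HOME, or raise an error."""
    env = os.environ if env is None else env
    java_home = env.get("JAVA_HOME")
    if not java_home:
        raise RuntimeError("JAVA_HOME is not set")
    # A usable installation is assumed to contain bin/java.
    java_bin = os.path.join(java_home, "bin", "java")
    if not os.path.isfile(java_bin):
        raise RuntimeError("no java binary at " + java_bin)
    return java_bin
```

Calling `check_java_home()` before starting the master or the slave either returns the binary's path or explains what is missing.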

For the purposes of this demonstration, we set up a single server and run the master and slave on the same node. Such a setup is good for getting your feet wet with Apache Spark on a laptop.

Setting up an AWS EC2 instance is quite straightforward, and we have covered it in our guide to setting up a Hadoop cluster. The procedure is the same up until the cluster is running on EC2. Follow the steps in that guide until the instance is launched, and get back here to continue with Apache Spark.

Once the instance is up and running on AWS EC2, we need to set up the requirements for Apache Spark. Install Java on the node using the Ubuntu package openjdk-8-jdk-headless: `sudo apt-get -y install openjdk-8-jdk-headless`

Next, head on over to the Apache Spark website and download the latest version. At the time of writing, the latest version is 2.1.0. We have chosen to install Spark with Hadoop 2.7 (the default). Download and unpack the Apache Spark package.
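The download-and-unpack step can be sketched in Python using only the standard library. The package name `spark-2.1.0-bin-hadoop2.7` matches the directory used in this article. The download location on `archive.apache.org` is an assumption about where this release is hosted, and the function names are illustrative.

```python
import os
import tarfile
from urllib.request import urlretrieve


def package_name(spark_version="2.1.0", hadoop_version="2.7"):
    """Name of the pre-built Spark package for a given Hadoop version."""
    return "spark-{}-bin-hadoop{}".format(spark_version, hadoop_version)


def download_url(spark_version="2.1.0", hadoop_version="2.7"):
    """Assumed archive location of the package (not taken from the article)."""
    name = package_name(spark_version, hadoop_version)
    return "https://archive.apache.org/dist/spark/spark-{}/{}.tgz".format(
        spark_version, name
    )


def download_and_unpack(dest_dir, spark_version="2.1.0", hadoop_version="2.7"):
    """Download the package into dest_dir, unpack it, and return its path."""
    os.makedirs(dest_dir, exist_ok=True)
    name = package_name(spark_version, hadoop_version)
    archive = os.path.join(dest_dir, name + ".tgz")
    urlretrieve(download_url(spark_version, hadoop_version), archive)
    with tarfile.open(archive, "r:gz") as tar:
        tar.extractall(dest_dir)
    return os.path.join(dest_dir, name)
```

For example, `download_and_unpack(os.path.expanduser("~/server"))` would leave the unpacked package under `~/server/spark-2.1.0-bin-hadoop2.7`.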

In this article, we delve into the basics of Apache Spark and show you how to set up a single-node cluster using the computing resources of Amazon EC2.

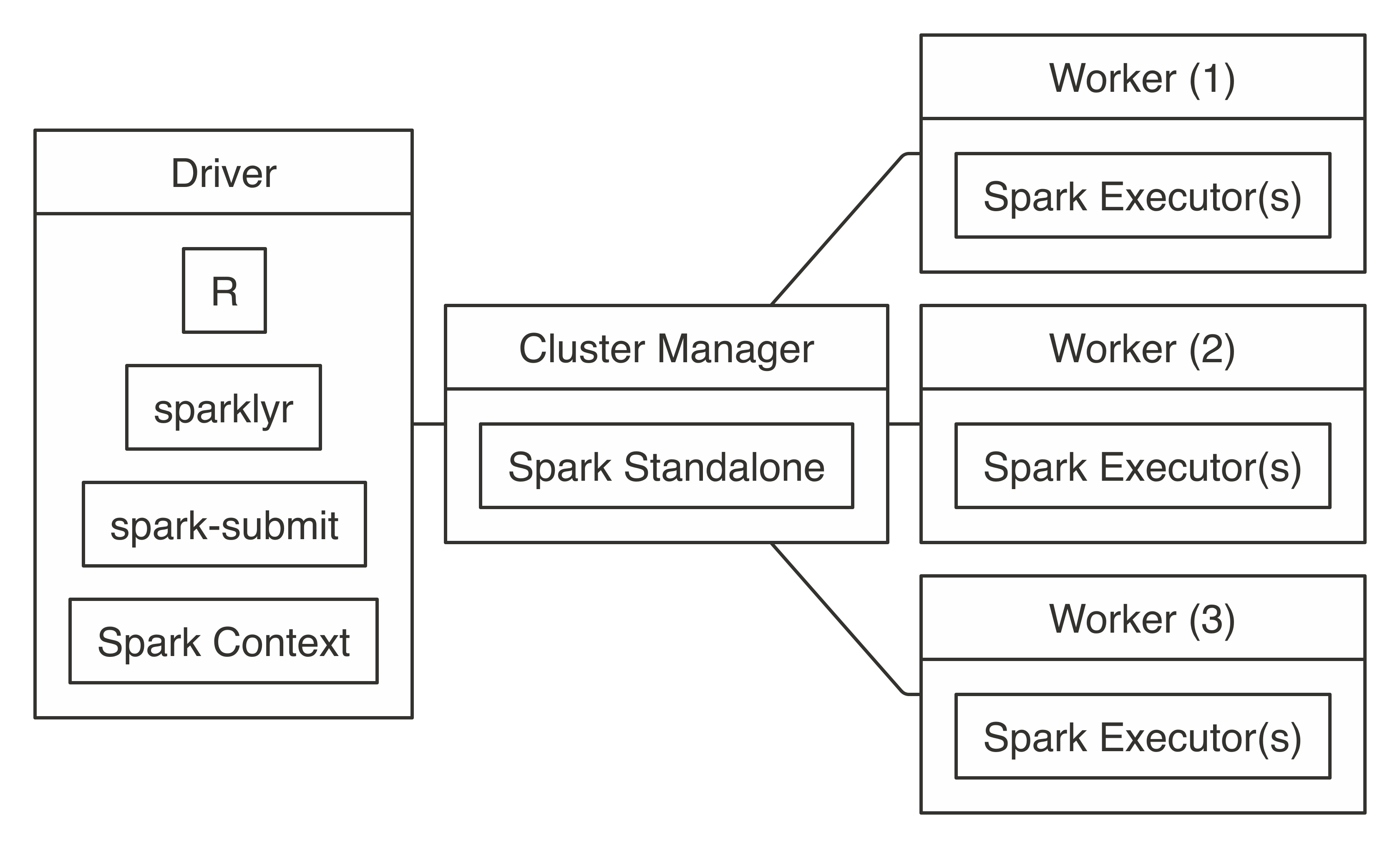

You have a data processing job working nicely with Java 8 Streams, but need more horsepower and memory than a single machine can provide? Apache Spark is your friend. It supports many patterns similar to the Java 8 Streams functionality, while letting you run these jobs on a cluster. While reusing major components of the Apache Hadoop framework, Apache Spark lets you execute big data processing jobs that do not neatly fit into the MapReduce paradigm. Apache Spark is the newest kid on the big data block.
